A few weeks ago, Simo Roikonen wrote in his excellent blog post "Data streams are not serenely flowing rivers - they arrive in waves" that in order to handle massive streams of data better, you need a distributed system for a more responsive and elastic solution. Distribution indeed solves the problem, and if you want it to succeed, you need to be aware of the challenges you will face when building reliable distributed systems.

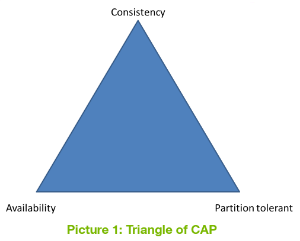

One of those challenges arises from the CAP theorem introduced by Eric Brewer.  CAP stands for: Consistency, Availability and Partition tolerance. Those are three different guarantees you want to achieve with your system, as illustrated in Picture 1.

CAP stands for: Consistency, Availability and Partition tolerance. Those are three different guarantees you want to achieve with your system, as illustrated in Picture 1.

Unfortunately, according to the theorem, in distributed systems you can select only two properties out of these three. And, in fact, you cannot avoid partitions in the networks. This means that you should select either CP or AP, as you cannot sacrifice partition tolerance in distributed systems.

The CAP theorem is a bit of a simplified view when it comes to distributed systems, but it offers a glimpse into the kinds of decisions you will need to make when implementing them. There are a number of other decisions that also need to be made (e.g. failure handling, latencies etc.),  but let's consider here only those related to the CAP theorem.

but let's consider here only those related to the CAP theorem.

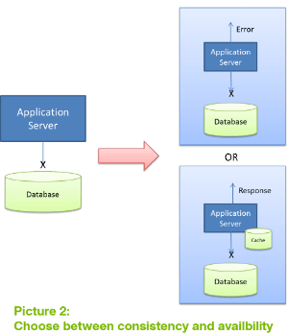

To take a simple example, you have an application server and a database in the network, and the network is partitioned so that the application server loses the connection with the database. As illustrated in Picture 2, there are two options: to not respond to the client anymore or to use some caching to provide information locally and merge the changes later on into the database when the connection is available again.

Which one do you choose?

Which one do you choose?

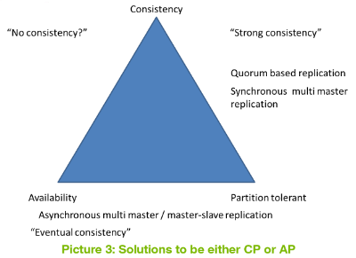

So, if you need to choose either consistency during network partitions or availability during network partitions, how do you know which one to choose? That, of course, depends on your requirements. Picture 3 shows some typical ways to achieve either CP or AP.

Consistent during network partition

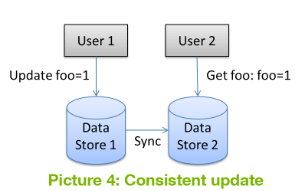

If you are familiar with the term consistency from ACID operations in the context of relational databases, you might be a bit confused as it means a bit different thing in a CAP context. With distributed systems it means that once the information is written, all users see the same changes in the same order, regardless of the node the data is located on. This is also referred to as linearization. This is illustrated in Picture 4, and it means that after user 1 has made the update, user 2 cannot get the old value anymore, but can see only the updated value, even if user 2 uses a different data node.

There are different ways to achieve consistency during a network partition. The naively simple solution is to not be available at all during network partitions. This approach is typically used in synchronous multi-master replication.

There are different ways to achieve consistency during a network partition. The naively simple solution is to not be available at all during network partitions. This approach is typically used in synchronous multi-master replication.

Another way to resolve this issue is to use a quorum-based algorithm. This is quite a typical approach in clustered environments that are partition-tolerant; it is used, for instance, by ZooKeeper. This means that the data is written successfully if the majority of the nodes in the cluster approves it; and if the majority is not available, the write fails. In order to achieve this, you must use an odd number of nodes in the cluster, because with even numbers you may have a split network with an equal number of nodes on both sides of a separated network and, therefore, both networks will be assumed to have a majority of the nodes, which can lead to an inconsistent state when the network is recovered.

This mode loses availability during the network partition, since operations can fail. Some systems, however, may relax the consistency level in order to achieve better availability and allow reading from minority nodes, but then the clients may see different results, depending on the node the data is located on.

Available during network partition

Availability in the context of CAP means that all nodes give a response to all requests even during network partition, which could potentially mean stale reads and conflicting writes. After the network partition has healed, the system can do a merge from different nodes. Merge algorithms may vary and some might take a last-write-wins approach, while others use vector clocks or, like Cassandra, a column-based approach.

Another way to resolve the issue is to use Conflict-free Replicated Data Types that provide strong eventual consistency.

Is the world so black and white?

The short answer is no. There's a great blog post by Martin Kleppmann about why you shouldn't even evaluate databases based on CAP capabilities: "Please stop calling databases CP or AP". The main argument is that the CAP theorem is too simple and doesn't meet the requirements that most systems have; you can, for example, fulfill the SLA requirements with a system that doesn't provide CAP availability, and the CAP theorem says nothing about latencies.

When analyzing current products that claim to be highly available, you will find out that they are not available like what is meant in CAP. Those are only P with high availability flavors. Like Master/Slave (i.e. single leader), where the writes are done using the master, but the reads can be done from slaves. A good example of this is MongoDB replication. During a network partition where the client can see only a slave node, it can read the data from the slave but can't write, as writes are done through the master. However, the data might not be consistent, since the master may have newer data, but because of the network partition, it's not available in the slave yet. This doesn't mean that you can't use these products. Network partitions are not so frequent, which means you can normally live with them without risking your SLAs or user experience. Normally what you don't meet in the SLAs are the latency requirements, and the CAP theorem doesn't address that at all. The system might be CAP-available even if the response comes back after one minute, but you wouldn't call that a highly available system.

Databases that claim to be fully consistent (i.e. CP in CAP terms) also behave badly during network partition. There's a great blog series, "Call me maybe", that analyzes different data storages and how they behave under network partition. Even a simple setup where you have a client and an RDBMS can end up in an inconsistent state on the system level, even though the database is in a consistent state.

So are all these systems useless?

No, they are not sloppy systems. In fact, they are used a lot in production and work extremely well, but they just don't meet either CP or AP in a CAP context.

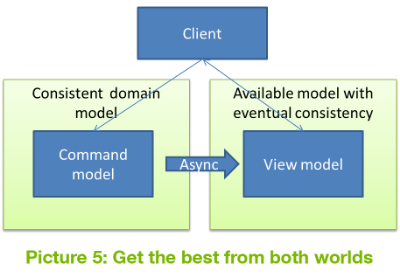

One thing to remember is that you can split your system into different parts and select different CAP strategies for them. Your system can be CP on the write side and AP on the read side. To achieve this, you can build a system based on the CQRS pattern introduced by Greg Young, where you have separate models for your domain and for your views,  as shown in Picture 5. The domain model can provide consistency using, for instance, event sourcing, and the read model is asynchronously updated from the domain model, i.e. it will eventually be consistent and provide high availability.

as shown in Picture 5. The domain model can provide consistency using, for instance, event sourcing, and the read model is asynchronously updated from the domain model, i.e. it will eventually be consistent and provide high availability.

The system overall is neither CP nor AP, but it can have better latencies and still be highly available without a proper CAP stamp on it.

As you can see, there is no free lunch when using distributed systems to achieve a reactive, resilient, elastic and responsive system. Distributed systems set new challenges that you need to tackle. However, once you are aware of the challenges and know your requirements, you will be prepared to meet those challenges head-on.

Related articles:

Data streams are not serenely flowing rivers - they arrive in waves